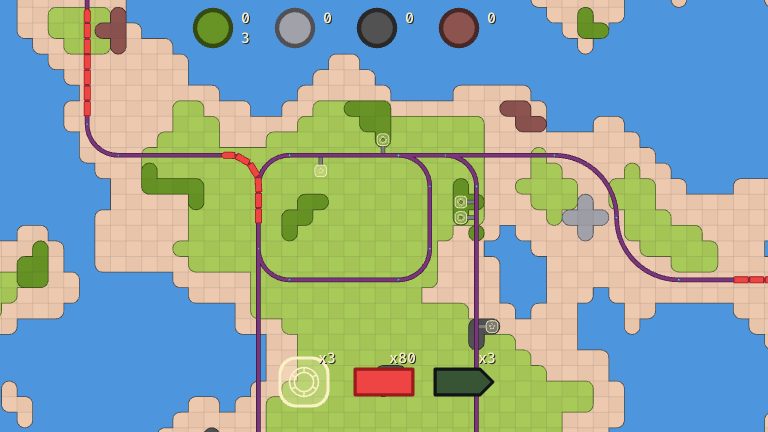

Over the last year and a half, I've made a few prototype games. Two of them were not very good, but one has actually been fun to play. In it you manage a network of trains to gather resources, and deliver them to get upgrades. The small gameplay loop has worked for development, but the 2D boxes have been a little uninspiring, even if they are colourful.

So, I'm taking things into the third dimension! To start, I want to make something that looks like technical drawings used for machining. These are very clean, readable, and best of all: it's an art style that a programmer like me can handle.

To do this we'll need to figure out where the edges are. The easiest way would be to render the 3D scene to a texture, then run a Roberts Cross or Sobel operator over it to highlight edges. We can do better by outputting our 3D scene to a custom texture with any information we'd like. The obvious choices for edges are depth and normals: wherever the face direction changes or the depth takes a step, we've hit an edge. However, I'm going to rely primarily on custom section maps for fine control. These are created as textures on the model, and any colour change between neighbouring pixels defines an edge when we run one of our operators. This gives complete control over where the edges appear, at the cost of a little more time in Blender.
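To make the operator idea concrete, here is a minimal CPU-side sketch of a Roberts Cross pass in Go. It is only an illustration: the real version would live in a shader, and the `robertsCross` name, the `img[y][x]` layout, and the sample depth values are all assumptions made for this example.

```go
package main

import (
	"fmt"
	"math"
)

// robertsCross returns the gradient magnitude for each pixel of a rectangular
// single-channel image stored as img[y][x]. The last row and column stay at
// zero because the 2x2 kernel would read past the image there.
func robertsCross(img [][]float64) [][]float64 {
	out := make([][]float64, len(img))
	for y := range img {
		out[y] = make([]float64, len(img[y]))
	}
	for y := 0; y+1 < len(img); y++ {
		for x := 0; x+1 < len(img[y]); x++ {
			gx := img[y][x] - img[y+1][x+1] // difference along one diagonal
			gy := img[y][x+1] - img[y+1][x] // difference along the other
			out[y][x] = math.Hypot(gx, gy)
		}
	}
	return out
}

func main() {
	// Hypothetical depth values: a near surface on the left, a far one on the right.
	depth := [][]float64{
		{0, 0, 1, 1},
		{0, 0, 1, 1},
		{0, 0, 1, 1},
		{0, 0, 1, 1},
	}
	for _, row := range robertsCross(depth) {
		for _, g := range row {
			if g > 0.5 {
				fmt.Print("#")
			} else {
				fmt.Print(".")
			}
		}
		fmt.Println()
	}
}
```

Flat areas produce a zero gradient, so only the column where the depth steps gets marked. Sobel works the same way with a 3x3 kernel instead of 2x2.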

The library used for the prototype (Raylib) doesn't offer as much control as I'd like for this, so we'll need to find a new one. After some research, I settled on SDL3. SDL is very stable and has been around forever. The main advantage SDL3 brings is SDL_GPU, an abstraction layer that sits above Vulkan, Direct3D 12, and Metal. I can use SPIRV to cross compile shaders, then SDL3 will select the best graphics backend at runtime, so I won't have to write shaders for each OS.

Since SDL3 is written in C, and I'm writing my game in Go, I'll use Zyko0/go-sdl3 as the Go library to interact with SDL3. This uses purego, so we still get the cross compilation benefits of GOOS and GOARCH. It also wraps the SDL ABI in more idiomatic Go (functions return an error, instead of returning a bool and making you fetch the error separately). As a bonus, this allows static linking to SDL very easily.

We'll keep the code pretty high level, since no one wants to go through the pain of setting up shaders.

To set up the project, let's render a triangle using the graphics card.

- Write simple vertex and fragment shaders. Cross compile the shaders with SPIRV, then add them to the build process. On Linux we can't easily cross compile DXIL for DirectX, but we'll figure that out later. Does anyone still use Windows anyways?

- Statically link and initialize SDL3

- Request access to the GPU device, create a new window, and have our GPU device claim the window

- Set up shaders: load the appropriate vertex and fragment shaders based on the runtime's backend (DirectX, Vulkan, or Metal), compile them, and send them to the GPU device

- Set up our graphics pipeline to target the swapchain. This acts like a regular texture that we can render to, but it's the texture that will be displayed in our window

- In the game loop we'll acquire a command buffer, wait for the swapchain texture to be available, start a render pass with our graphics pipeline, draw primitives, and submit the command buffer

I'm skipping the details, since setting up graphics pipelines always involves a lot of boilerplate code.

We'll load our 3D model from a GLTF file (.glb). Start by loading the vertex and index data, create buffers for each, transfer our buffers to the GPU, and clean up the mess we just made.
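For a sense of what sits inside a .glb before any vertex data can be read, here is a rough sketch that splits the container into its JSON chunk (the scene description) and its binary chunk (the raw vertex, index, and image bytes). It follows the glTF 2.0 binary layout, but the `splitGLB` and `buildGLB` names are made up for this example, and a real loader would go on to walk the JSON accessors and buffer views.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

const (
	glbMagic  = 0x46546C67 // "glTF" read as a little-endian uint32
	chunkJSON = 0x4E4F534A // "JSON"
	chunkBIN  = 0x004E4942 // "BIN\0"
)

// splitGLB separates a .glb container into its JSON and binary chunks.
func splitGLB(data []byte) (jsonChunk, binChunk []byte, err error) {
	le := binary.LittleEndian
	if len(data) < 12 || le.Uint32(data[0:4]) != glbMagic {
		return nil, nil, errors.New("glb: missing or invalid header")
	}
	total := int(le.Uint32(data[8:12])) // bytes 4..8 hold the container version
	if total > len(data) {
		return nil, nil, errors.New("glb: file is truncated")
	}
	for off := 12; off+8 <= total; {
		size := int(le.Uint32(data[off:]))
		kind := le.Uint32(data[off+4:])
		off += 8
		if size > total-off {
			return nil, nil, errors.New("glb: chunk overruns file")
		}
		switch kind {
		case chunkJSON:
			jsonChunk = data[off : off+size]
		case chunkBIN:
			binChunk = data[off : off+size]
		}
		off += size
	}
	if jsonChunk == nil {
		return nil, nil, errors.New("glb: no JSON chunk")
	}
	return jsonChunk, binChunk, nil
}

// buildGLB assembles a tiny container for the demo; real files come from Blender.
func buildGLB(jsonData, binData []byte) []byte {
	le := binary.LittleEndian
	for len(jsonData)%4 != 0 {
		jsonData = append(jsonData, ' ') // JSON chunks are padded with spaces
	}
	for len(binData)%4 != 0 {
		binData = append(binData, 0) // BIN chunks are padded with zeros
	}
	total := 12 + 8 + len(jsonData) + 8 + len(binData)
	out := le.AppendUint32(nil, glbMagic)
	out = le.AppendUint32(out, 2) // container version
	out = le.AppendUint32(out, uint32(total))
	out = le.AppendUint32(out, uint32(len(jsonData)))
	out = le.AppendUint32(out, chunkJSON)
	out = append(out, jsonData...)
	out = le.AppendUint32(out, uint32(len(binData)))
	out = le.AppendUint32(out, chunkBIN)
	return append(out, binData...)
}

func main() {
	file := buildGLB([]byte(`{"asset":{"version":"2.0"}}`), []byte{1, 2, 3, 4})
	j, b, err := splitGLB(file)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("json: %s\nbin: %d bytes\n", j, len(b))
}
```

The binary chunk is what ends up being sliced into vertex and index buffers, using the offsets and lengths listed in the JSON.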

For now, let's just hardcode the view and projection matrices in the shader. We'll figure that out later.

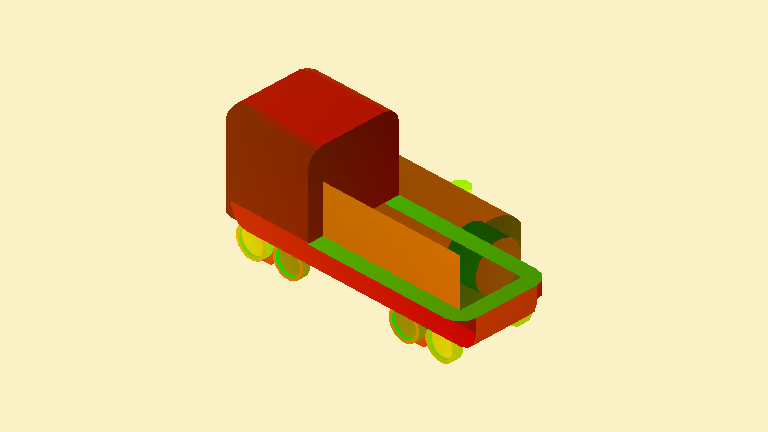

You'll have to concentrate pretty hard to see that as a train.

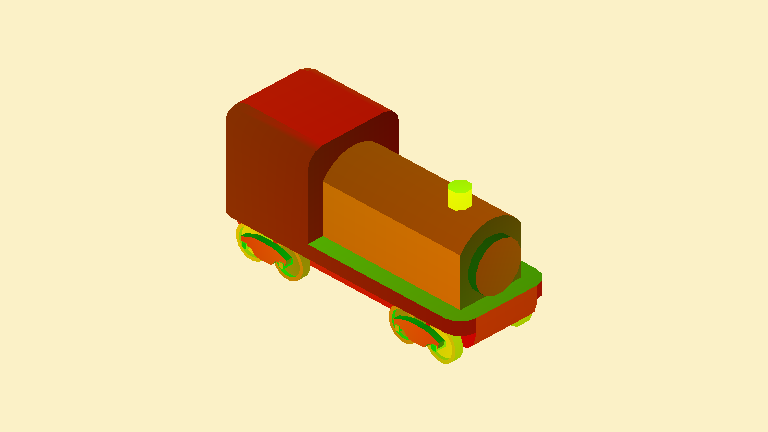

Next we'll load the GLTF texture coordinates and textures, then display the UVs directly on the model, with the x coordinate shown in red and y in green. To do this we'll get the texture coordinates from the GLTF file, grab the texture with a matching index, and convert the raw data into a pixel buffer. A sampler also has to be created. This tells the graphics card how it should interpret a point on the texture: for example, whether it should blend the colour or keep hard edges. Then we send everything over to the GPU using transfer buffers like before.

This shows the UVs, but some triangles from the back are being rendered on top.
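The blend-or-hard-edges choice is easiest to see on the CPU. The sketch below samples a tiny single-channel texture two ways: nearest (hard edges) and linear (blended). The `texture` type and both function names are invented for this illustration, and the GPU does the equivalent work in hardware based on the sampler settings.

```go
package main

import (
	"fmt"
	"math"
)

// texture is a single-channel image; a real one would hold RGBA texels.
type texture struct {
	w, h int
	pix  []float64
}

func clamp(v, lo, hi int) int {
	if v < lo {
		return lo
	}
	if v > hi {
		return hi
	}
	return v
}

// at reads a texel, clamping coordinates to the edge of the texture.
func (t texture) at(x, y int) float64 {
	return t.pix[clamp(y, 0, t.h-1)*t.w+clamp(x, 0, t.w-1)]
}

// sampleNearest returns the texel that contains (u, v): hard edges.
func sampleNearest(t texture, u, v float64) float64 {
	x := int(math.Floor(u * float64(t.w)))
	y := int(math.Floor(v * float64(t.h)))
	return t.at(x, y)
}

// sampleLinear blends the four texels around (u, v), weighted by distance
// to their centres (texel centres sit at +0.5).
func sampleLinear(t texture, u, v float64) float64 {
	fx := u*float64(t.w) - 0.5
	fy := v*float64(t.h) - 0.5
	x0 := int(math.Floor(fx))
	y0 := int(math.Floor(fy))
	tx := fx - float64(x0)
	ty := fy - float64(y0)
	lerp := func(a, b, w float64) float64 { return a + (b-a)*w }
	top := lerp(t.at(x0, y0), t.at(x0+1, y0), tx)
	bottom := lerp(t.at(x0, y0+1), t.at(x0+1, y0+1), tx)
	return lerp(top, bottom, ty)
}

func main() {
	// Two texels side by side: black on the left, white on the right.
	t := texture{w: 2, h: 1, pix: []float64{0, 1}}
	for _, u := range []float64{0.25, 0.5, 0.75} {
		fmt.Printf("u=%.2f nearest=%.2f linear=%.2f\n",
			u, sampleNearest(t, u, 0.5), sampleLinear(t, u, 0.5))
	}
}
```

Right on the border between the two texels, nearest snaps to one value while linear returns a blend. For a map where each colour marks a distinct region, blending would introduce in-between colours along the borders.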

Must be that depth buffers are broken, so let's set those up. By now we're reusing lots of helper functions we've written along the way.

Next, a camera. This takes a position, a target to look at, and a few other properties, then outputs a nice 4x4 view matrix and projection matrix. Once uniform buffers are set up, all of that data is sent to the vertex shader on the GPU.
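As a rough idea of what the camera computes, here is a look-at view matrix in plain Go. It assumes a right-handed setup where the camera looks down its own -Z axis, with matrices indexed `m[row][col]` and multiplied against column vectors. Those conventions, and all the names, are choices made for this sketch rather than anything SDL requires.

```go
package main

import (
	"fmt"
	"math"
)

type vec3 struct{ x, y, z float64 }

func sub(a, b vec3) vec3    { return vec3{a.x - b.x, a.y - b.y, a.z - b.z} }
func dot(a, b vec3) float64 { return a.x*b.x + a.y*b.y + a.z*b.z }
func cross(a, b vec3) vec3 {
	return vec3{a.y*b.z - a.z*b.y, a.z*b.x - a.x*b.z, a.x*b.y - a.y*b.x}
}
func normalize(a vec3) vec3 {
	l := math.Sqrt(dot(a, a))
	return vec3{a.x / l, a.y / l, a.z / l}
}

// mat4 is indexed m[row][col] and multiplies column vectors.
type mat4 [4][4]float64

// lookAt builds a view matrix that moves the world so the camera sits at the
// origin looking down -Z. Assumes up is not parallel to the view direction.
func lookAt(eye, target, up vec3) mat4 {
	f := normalize(sub(target, eye)) // forward
	r := normalize(cross(f, up))     // right
	u := cross(r, f)                 // corrected up
	return mat4{
		{r.x, r.y, r.z, -dot(r, eye)},
		{u.x, u.y, u.z, -dot(u, eye)},
		{-f.x, -f.y, -f.z, dot(f, eye)},
		{0, 0, 0, 1},
	}
}

// transformPoint applies m to a point (w is taken to be 1).
func transformPoint(m mat4, p vec3) vec3 {
	return vec3{
		m[0][0]*p.x + m[0][1]*p.y + m[0][2]*p.z + m[0][3],
		m[1][0]*p.x + m[1][1]*p.y + m[1][2]*p.z + m[1][3],
		m[2][0]*p.x + m[2][1]*p.y + m[2][2]*p.z + m[2][3],
	}
}

func main() {
	eye := vec3{0, 0, 5}
	target := vec3{0, 0, 0}
	view := lookAt(eye, target, vec3{0, 1, 0})
	fmt.Println("eye in view space:   ", transformPoint(view, eye))
	fmt.Println("target in view space:", transformPoint(view, target))
}
```

The camera position lands on the origin and the target ends up straight ahead on the negative Z axis, which is what the projection matrix expects as input.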

Turns out the depth buffer was not the issue for rendering order. When I hardcoded the view and projection matrices I assumed OpenGL's -1 to 1 depth range, but Vulkan uses a 0 to 1 range. Once we rewrite our orthographic projection matrix with that in mind, it works!

This displays the coordinates that we need to sample textures at. Should be simple, but every time we sample a texture our shaders crash. Unfortunately, debugging shaders can be painful. After banging my head against the wall, the cause turns out to be resource sets: for fragment shaders, SDL puts samplers and textures in resource set 2, while HLSL defaults to set 0 unless one is given explicitly. So our shaders were trying to access textures in the wrong place.
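The difference between the two conventions comes down to two entries in the matrix. This sketch builds an orthographic projection both ways, assuming a right-handed view space where visible points have z between `-near` and `-far`. The `ortho` and `clipDepth` names and the `zeroToOne` flag are invented for the example.

```go
package main

import "fmt"

// mat4 is indexed m[row][col] and multiplies column vectors.
type mat4 [4][4]float64

// ortho builds an orthographic projection. With zeroToOne set, depth maps to
// 0..1 (Vulkan style); otherwise it maps to -1..1 (OpenGL style).
func ortho(l, r, b, t, n, f float64, zeroToOne bool) mat4 {
	m := mat4{
		{2 / (r - l), 0, 0, -(r + l) / (r - l)},
		{0, 2 / (t - b), 0, -(t + b) / (t - b)},
		{0, 0, 0, 0},
		{0, 0, 0, 1},
	}
	if zeroToOne {
		m[2][2] = -1 / (f - n)
		m[2][3] = -n / (f - n)
	} else {
		m[2][2] = -2 / (f - n)
		m[2][3] = -(f + n) / (f - n)
	}
	return m
}

// clipDepth returns the projected depth of a view-space z value. For an
// orthographic matrix w stays 1, so no divide is needed.
func clipDepth(m mat4, zView float64) float64 {
	return m[2][2]*zView + m[2][3]
}

func main() {
	vulkan := ortho(-1, 1, -1, 1, 1, 5, true)
	opengl := ortho(-1, 1, -1, 1, 1, 5, false)
	for _, z := range []float64{-1, -3, -5} {
		fmt.Printf("view z=%v  0..1: %v  -1..1: %v\n",
			z, clipDepth(vulkan, z), clipDepth(opengl, z))
	}
}
```

With the -1 to 1 matrix, anything in the near half of the view volume comes out with a negative depth, which falls outside the 0 to 1 range.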

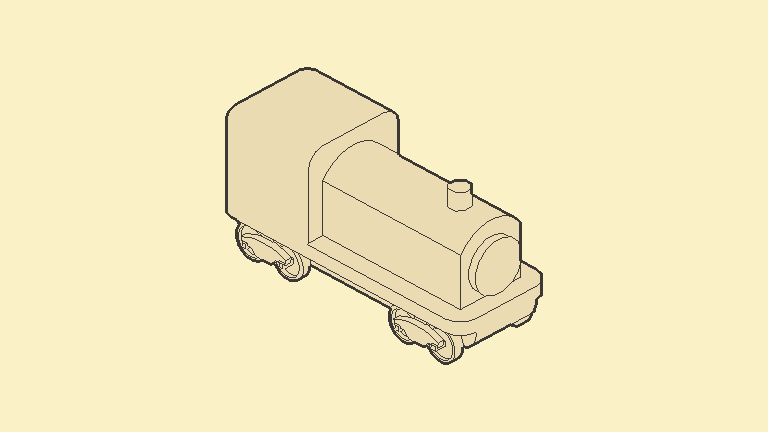

This may look wrong, but that's actually how the train is modelled in Blender.

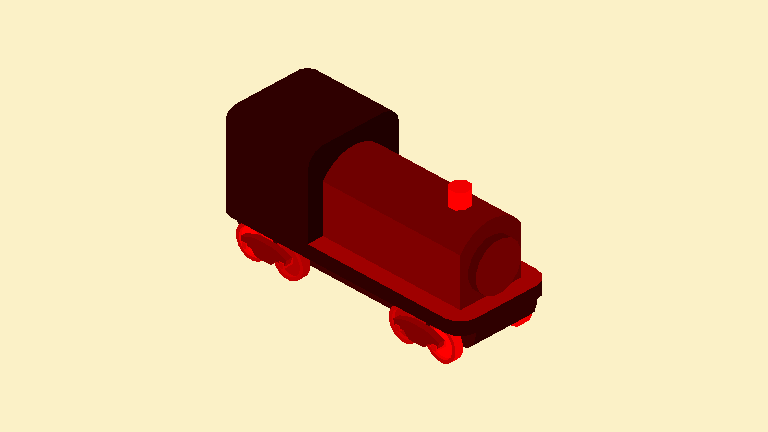

Right now the scene is being rendered straight to the swapchain for output to the window. To set up screen space post-processing effects, we'll render the scene to a texture, then pass that texture into another shader to do the final edge detection and output to the swapchain texture. Good thing we wrote some reusable functions as we went along, so this is mostly a repeat of setting up the previous shaders and pipelines.

Once there are multiple objects in the scene, depth alone will be of limited use for outlining them. At that point we'll switch to using object ids for the outlines.

Finally, let's do edge detection on the section map. Using a 2px distance for the depth edges and a 1px distance for the section edges creates a clean outline with interior details.
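Here is one way the combined test could look, written as CPU-side Go instead of a shader. It is a guess at the structure, not the actual shader: it uses Roberts Cross style diagonal taps, compares section ids exactly at a 1px offset, and compares depth against a threshold at a 2px offset. The `frame` type, the `isEdge` function, and the threshold value are all assumptions for this sketch.

```go
package main

import (
	"fmt"
	"math"
)

// frame holds the two per-pixel inputs: a section id (standing in for the
// section map colour) and a depth value.
type frame struct {
	w, h    int
	section []uint32
	depth   []float64
}

func newFrame(w, h int) frame {
	return frame{w, h, make([]uint32, w*h), make([]float64, w*h)}
}

// idx converts a coordinate to a slice index, clamping to the frame border.
func (f frame) idx(x, y int) int {
	x = int(math.Max(0, math.Min(float64(x), float64(f.w-1))))
	y = int(math.Max(0, math.Min(float64(y), float64(f.h-1))))
	return y*f.w + x
}

// isEdge reports whether pixel (x, y) lies on a section or depth edge.
func isEdge(f frame, x, y int, depthThreshold float64) bool {
	// Section edge: any id change across either diagonal, 1px apart.
	s := func(dx, dy int) uint32 { return f.section[f.idx(x+dx, y+dy)] }
	if s(0, 0) != s(1, 1) || s(1, 0) != s(0, 1) {
		return true
	}
	// Depth edge: gradient across the diagonals, 2px apart for a thicker line.
	d := func(dx, dy int) float64 { return f.depth[f.idx(x+dx, y+dy)] }
	gx := d(0, 0) - d(2, 2)
	gy := d(2, 0) - d(0, 2)
	return math.Hypot(gx, gy) > depthThreshold
}

func main() {
	f := newFrame(8, 6)
	for y := 0; y < f.h; y++ {
		for x := 0; x < f.w; x++ {
			if x >= 4 {
				f.section[y*f.w+x] = 2 // a second section on the right
			}
			if y >= 3 {
				f.depth[y*f.w+x] = 0.8 // a farther surface at the bottom
			}
		}
	}
	for y := 0; y < f.h; y++ {
		for x := 0; x < f.w; x++ {
			if isEdge(f, x, y, 0.1) {
				fmt.Print("#")
			} else {
				fmt.Print(".")
			}
		}
		fmt.Println()
	}
}
```

The wider tap distance makes the depth line thicker than the section line, so silhouettes read more strongly than interior detail.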

Once we add in the spinning camera, the effect really comes to life.

This was a bit of an adventure to get here, but the result is promising. We'll keep iterating as we make the rest of the game. Next time we'll add support for multiple object types, and run some performance tests.

More blog posts coming soon.
